Commentary on “A Response to the Consultation on the Reform to Retail Prices Index (RPI) Methodology”

Summary:

On the 18th July 2019, Sir Bernard Jenkin reported to the House of Commons the findings of the then recent PACAC inquiry, saying: “In January 2019, the Economic Affairs Committee of the other place reported that by failing to fix RPI, UKSA risks breaching its statutory duties.”

We believe that report formed the primary impetus for the subsequent consultation and ultimately the Response to the Consultation on the Reform to Retail Prices Index (RPI) Methodology, as published by the Treasury and the UKSA on the 25th November 2020. This document therefore provides the Campaign for Better Statistics’ comments on the first 3 sections of that Response.

In launching the Response, on the 25th November 2020, the National Statistician, Professor Sir Ian Diamond, said:

“The RPI is not fit for purpose and we strongly discourage its use. The Authority’s proposal is designed to address its shortcomings by bringing the methods and data of CPIH into RPI.”

The post of National Statistician was originally established by the Statistics and Registration Service Act of 2007, which also established the UKSA. Section 7 of that Act sets out the objectives for the UKSA, including the following:

- In the exercise of its functions under sections 8 to 21 the Board is to have the objective of promoting and safeguarding the production and publication of official statistics that serve the public good.

- In subsection (1) the reference to serving the public good includes in particular—

- informing the public about social and economic matters, and

- assisting in the development and evaluation of public policy

The origin of Sir Bernard Jenkin’s report of the UKSA’s failure to ‘fix’ the RPI goes back to 2012 / 2013, when the then National Statistician removed the National Statistic status from the RPI, primarily on the grounds that it did not meet international standards. The United Kingdom was unique in its use of the Carli (arithmetic) averaging of prices instead of formulae using geometric averaging, the most common of which is the Jevons formula; this subsequently gave rise to the RPIJ as a possible alternative to the RPI[1]. We have been unable to trace the precise reason for the ultimate selection of the CPIH, rather than the RPIJ, as a replacement for the RPI, and we note that the meeting of the APCP-T held on 9th October 2020 confirmed to Sir Ian Diamond that there is not presently a consensus internationally in respect of a suitable index. We assume, therefore, that the primary reason for the selection of CPIH is the manner in which it simplifies the task of dealing with the complex issues of price indices designed to meet both domestic needs and international comparisons.

Nevertheless it may be fairly argued that convenience is insufficient justification for this change, as the Chief Executive of the Royal Statistical Society has said “The … plan to replace RPI with CPIH is a clear case of using the wrong tool for the job”.

It therefore seems reasonable that there should be two measures available, one (the macro-economic estimate) designed to satisfy any requirements for International comparison and the other for purely domestic purposes. It is understood that this dual arrangement is used in other countries and is, of course, the de facto arrangement currently in the UK.

Meanwhile, the Campaign for Better Statistics considers that the above findings call into question the basis for the changes that the Authority intends to impose on those who rely upon the existing version of the RPI. The consultation has clearly indicated that the Authority has failed in its duty to “inform the public about social and economic matters” despite having 7 years to explain its reasoning. Moreover it has also failed to make any effective assessment of the impact of the change, relying solely upon the consultation process for that assessment and then effectively ignoring the majority of the replies received.

In our opinion these findings call into further question the governance of the UKSA and therefore the Campaign fully supports the conclusion of PACAC, as stated by Sir Bernard on 18th July last year, namely: “We therefore recommend that UKSA is split into two separate bodies: the Office for National Statistics and the Office for Statistics Regulation.”

A particular irony of the matter is that Sir Bernard’s expressed concern was that the RPI needed to be ‘fixed’ because it might overstate inflation, causing unnecessary distress to those directly affected by such overestimation – mentioning rail users and students repaying loans in particular. On the other hand, those arguing against change in the most recent consultation were largely concerned that CPIH might underestimate inflation, leaving many pensioners and others worse off. It may therefore be argued that the UKSA cannot win. But that is a consequence of the Act, which assumes that there is such a thing as a purely mathematical, independent measure. Unfortunately, economics is not devoid of ‘choice’ and we therefore also recommend that the UKSA should in future be placed under some form of genuine democratic control.

Irrespective of those possibilities, we believe the UKSA should provide the following details to ensure a better understanding of the decision by the public.

- The exact reasons why the RPI has been declared unfit for purpose.

- The exact reasons why CPIH is the preferred replacement, including how it rectifies the perceived faults of the RPI.

- Why the consultation does not provide any qualitative or quantitative understanding of the effect that the proposed change will have on users.

In each case the Authority should explain its reasoning in language that is accessible to the general reader and therefore satisfies the criterion that the RPI exists to meet a social and civic need.

Introduction:

The 25th November 2020, the day the Chancellor of the Exchequer provided the 2020 spending statement, was also the day selected by the UK Statistics Authority (UKSA) to release the response to the recent Consultation on the RPI (the Response). This commentary is intended to examine that response to ascertain whether the consultation has been fairly conducted or not. I should emphasise that it is not my intention to question the legality of the process; my concern is whether it can be said to have contributed to the strategic objective of the UKSA to provide statistics for the public good, an objective that was also specified in the Act of Parliament that created the Authority in 2007.

In the first instance it is worth quoting from the press release issued by the UKSA on the 25th.

The Chancellor has announced that while he sees the statistical arguments of the Authority’s intended approach to reform, in order to minimise the impact of reform on the holders of index-linked gilts, he will be unable to offer his consent to the implementation of such a proposal before the maturity of the final specific index-linked gilt in 2030. In response, Chair of the UK Statistics Authority, Sir David Norgrove, said:

“We are pleased that the Chancellor acknowledges the statistical case for our proposed change to the RPI but regret the Government’s decision that the change should not be made before 2030.

“It is UK Statistics Authority policy to address the shortcomings of the RPI in full at the earliest practical time. The change we propose can legally and practically be made by the Authority in February 2030.

“We continue to urge the Government and others to cease to use the RPI, a measure of inflation which the Government itself recognises is not fit for purpose.”

The National Statistician, Professor Sir Ian Diamond, said:

“The RPI is not fit for purpose and we strongly discourage its use. The Authority’s proposal is designed to address its shortcomings by bringing the methods and data of CPIH into RPI.”

All fairly definitive and, indeed, conclusive, but the truth is that this was the position before the consultation, a consultation that was really only concerned with whether the Chancellor would permit the UKSA to proceed with their proposals any earlier than 2030. The UKSA were keen to proceed as soon as possible, the Chancellor was reluctant, and the consultation was sought to give guidance on the date of the transfer, not whether it was a good idea or not! The truth is that the 2007 Act creating the UKSA had provided them with unfettered control over both what should be accepted as a National Statistic and the methodology to be employed. The sole exception recognised by the Act was the Retail Prices Index, because of its importance to the Government in respect of its responsibilities towards holders of gilts and their value as a source of Government funding. Nevertheless, the consultation did cover issues other than the timing of the change and it is therefore worth reviewing the results in detail. In the meantime, I am unaware of any government spokesperson having confirmed that the RPI is “a measure of inflation which the Government itself recognises is not fit for purpose.”

Bearing in mind that the RPI measures “the change in the cost of a representative sample of a range of retail goods and services” it must be recognised that how this measurement is achieved can be subject to considerable dispute. We are therefore less concerned with that debate than with the question as to how well the Authority has dealt with other objectives determined by the 2007 Act, in particular clause (2) of Section 7 (Objectives) which states: In subsection (1) the reference to serving the public good includes in particular—

(a) informing the public about social and economic matters

As will be seen, whatever else the consultation has achieved, the result clearly indicates that the Authority has failed to persuade many stakeholders of the need for change, even though it removed the status of National Statistic from the RPI in 2013.

The following provides a relatively detailed commentary on each of the first 3 main sections of the Response, taken in order and beginning with the Overview summarising the main findings. We are also grateful for the advice from Andy King of the Office for National Statistics, as provided in the Appendix.

1. Overview:

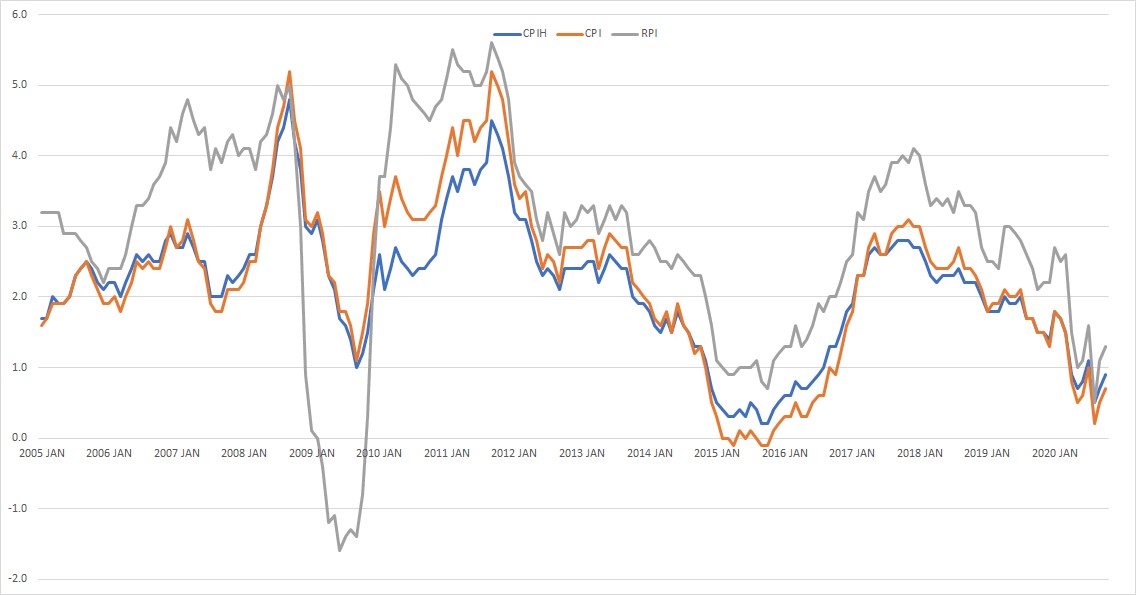

1.1. Background: The initial paragraph of the Response states that the RPI has “at times greatly overestimated, and at other times underestimated the rate of inflation.” Although no examples of these variations are actually provided in the Response, on the 8th March 2018, the ONS published “Shortcomingsof the Retail Prices Index as a measure of inflation” which included the following chart:

I infer from this example that the CPIH is the measure of inflation chosen for the comparison. Although I find the diagram difficult to interpret, it would seem to imply that the RPI measure was approximately 2% higher than CPIH towards the end of 2007, slumping to over 3% lower than CPIH in 2009 and bouncing back to over 2.5% higher again in 2010[2]. It appears that the primary source of those differences was the mortgage interest payments calculated within the RPI, although other factors contributed. Over recent years the difference has settled around the 1% level, primarily caused by the difference due to the use of different formulae (known as the formula effect). For the record, we accept that volatility primarily caused by one element of an index (in this case mortgage interest payments) should not be acceptable, but that might be a reason for altering the balance of the basket, rather than making a wholesale change. Of course, there are other influences at play.
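The formula effect mentioned above can be stated compactly. As a sketch in our own notation (the symbols are an assumption for illustration and are not taken from the Response), let $p_i^{0}$ and $p_i^{t}$ be the prices of item $i$, one of $n$ items in an elementary aggregate, in the base and current periods. The Carli and Jevons formulae are then:

$$
P_C = \frac{1}{n}\sum_{i=1}^{n}\frac{p_i^{t}}{p_i^{0}},
\qquad
P_J = \left(\prod_{i=1}^{n}\frac{p_i^{t}}{p_i^{0}}\right)^{1/n},
\qquad
P_C \ge P_J .
$$

The inequality is the standard relationship between arithmetic and geometric means, with equality only when every price relative is the same. At this level, therefore, an index built on the Carli formula cannot show lower inflation than one built on the Jevons formula from the same prices.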

With regard to the consultation there were two other primary concerns beyond the proposed date of the change:

- Consideration of the technical approach to be taken by the UKSA in formally making the change from the RPI to the CPIH, and

- A request for opinions on the broader aspects of the change, beyond those of direct concern to the Chancellor. In particular the consultation sought views on the proposals to discontinue the supplementary and lower level indices presently associated with the RPI.

1.2. How the RPI will change: The Authority confirms its intention to proceed with the changes as described in the consultation document of 11th March 2020. This decision is partially based upon advice from its Technical Advisory Panel for Consumer Price Statistics (APCP-T), which met on the 10th July 2020. Due to the exigencies created by the Covid-19 pandemic that meeting was held as a teleconference with an extensive agenda, and therefore relatively little time could be devoted to the agenda item “Consultation on the reform to Retail Prices Index Methodology”; accordingly the minutes read:

3.1. Mr Payne gave an overview of the questions asked in the RPI consultation, which related to the statistical robustness of the proposed method for bringing the methods and data sources of the CPIH into the RPI, the current use of supplementary RPI indices and guidance that users would find helpful. The Panel was asked for their views.

3.2. There was a suggestion that, as CPIH doesn’t include mortgage interest, the RPI All Items Excl Mortgage Interest (RPIX) could be set as equal to CPIH. However, Mr Payne suggested that users of this sub index often wish to use an RPI that excludes Owner Occupier’s Housing Costs (OOH), in which case CPI could be a more appropriate replacement. Therefore, it would be cleaner to stop RPIX and direct users to the most relevant measure for their needs.

3.3. There was discussion around the difficulty of communicating the legal restrictions around the publication and the nature of the RPI to the public.

3.4. The panel had previously agreed that the method presented in the consultation was the only statistically appropriate approach, as noted in the minutes from the meeting of November 2019.

3.5. A joint Technical Panel response was discussed and ONS agreed to prepare a draft reflecting their views. Whilst panel members agreed on the linking method it was recognised that there were different views around other aspects of the consultation and panel members may wish to opt out of a combined response.

We have been unable to identify the proposed draft paper mentioned under 3.5 and we note that the Authority is primarily relying on the APCP-T meeting of November 2019, as the endorsement for their decision, rather than the more recent consultation.

With regard to the supplementary indices, the Authority took the view that there was little evidence provided in the consultation to merit continuing to publish these or similar indices, once the change to CPIH had been effected. Instead they have undertaken to “provide users with guidance to assist in moving away from RPI related indices.”

1.3. / 1.4. The Chancellor’s view on the timing of the reform: These sections of the Response record the Chancellor’s decisions to:

- Delay the implementation of the proposal to the latest possible date.

- Not provide any compensation for those holding index linked Gilts – because the government will continue to use the RPI until 2030 when they will mature.

1.5. Implementing the Authority’s Proposal: This section records the Authority’s intention to “address the shortcomings of the RPI in full at the earliest practical time”, whilst confirming that it has the legal authority to make the change in 2030.

1.6. The broader impacts of the Authority’s proposal: The Authority acknowledges the evidence that members of defined benefit pension schemes and some other groups are likely to be disadvantaged by the reform. However, the suggestion is that any disadvantage may be minimised by the manner in which the Authority intends to continue to review the various ‘use cases’ as outlined in Measuring Changing Prices and Costs for Consumers and Households, published earlier this year. They also confirm their intention to incorporate new data sources such as scanner data and web scraped data, whilst introducing Household Costs Indices to reflect changing prices and costs as experienced by different household groups. No details are provided as to the expected effects of these innovations.

2. How we consulted

2.1. The Consultation Period: The consultation was launched at the Budget on 11th March 2020 for an initial six week period, to close on the 22nd April. In the event, the pandemic caused an extension to 21st August 2020, which enabled the Campaign for Better Statistics to become aware of the matter and to contribute its reply. The Authority also ran a user engagement event on 27th July 2020 and attended various other stakeholder events during the consultation period.

2.2. Response to the consultation: 831 replies were received by close of the consultation; 240 represented organisations of one form or another with 591 from individuals (257 of these being members of the British Airways Pension Scheme). As noted in the Response this was more than double the number received for the previous consultation in 2012.

It will be interesting to read the written responses received when published – meanwhile see section 3.2 below for a summary of the responses received.

3. How the RPI will change

3.1. The Consultation: The Response defines the change as follows: “the Authority’s policy is to address the shortcomings of the RPI in full at the earliest legal and practical opportunity by bringing the methods and data sources from the National Statistic, the CPIH into the RPI” and that “the transition should be made in a way that follows best statistical practice”. They go on to confirm that “therefore the RPI index values will be calculated using the same data sources as are used for the CPIH….. It is also the ONS’s intention to stop publishing supplementary indices such as the RPIX…”

It is evident that, in conducting the consultation, the Authority was less concerned with the fundamentals of these objectives than with ‘best statistical practice’, a duty also imposed upon them by the 2007 act. Since a major reason for the consultation was to ensure that the RPI could be returned to the status of a National Statistic, it is of some value to understand why the RPI originally lost that status and why it is now defined by the National Statistician as being ‘not fit for purpose’.

As mentioned above, there had been a consultation in respect of the RPI held in 2012. The then National Statistician had been prompted by the need to address the gap between the RPI and the CPI and work by the ONS had suggested that the use of the Carli method for aggregating price change data at the very detailed level in the RPI did not meet current international standards.
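A commonly cited technical objection to the Carli formula, which may help readers follow the reference to international standards, is that it can drift upwards when monthly results are chained together. The following is a minimal sketch under our own assumptions: the two items and their prices are hypothetical, the function names are ours, and it is not the ONS's production method. Both prices return to their starting level after two months, yet the chained Carli index ends above 1 while the chained Jevons index returns to 1.

```python
from math import prod


def carli(relatives):
    """Arithmetic mean of price relatives (Carli formula)."""
    return sum(relatives) / len(relatives)


def jevons(relatives):
    """Geometric mean of price relatives (Jevons formula)."""
    return prod(relatives) ** (1 / len(relatives))


# Hypothetical prices for two items over three months.
# One price rises then falls back; the other falls then rises back.
PRICES = {
    "item_a": [10.0, 12.0, 10.0],
    "item_b": [10.0, 8.0, 10.0],
}


def month_on_month(prices, t):
    """Price relatives for month t against month t - 1."""
    return [series[t] / series[t - 1] for series in prices.values()]


def chained(formula, prices):
    """Multiply together the month-on-month indices given by `formula`."""
    months = len(next(iter(prices.values())))
    index = 1.0
    for t in range(1, months):
        index *= formula(month_on_month(prices, t))
    return index


if __name__ == "__main__":
    print("Chained Carli: ", round(chained(carli, PRICES), 4))
    print("Chained Jevons:", round(chained(jevons, PRICES), 4))
```

With these illustrative figures the chained Carli index is about 1.042, that is, roughly 4% apparent inflation although no price has changed over the period as a whole. This illustrates a general property of the formula and is not a reconstruction of the reasoning actually given in 2012.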

That consultation had offered consideration of 4 different options for the RPI. Briefly these were:

- No change to the existing RPI (at that time a National Statistic).

- Make one change for the averaging formula to the Clothing element of the RPI.

- Make one change for the averaging formula for all elements of the basket of goods.

- Change the RPI so that its formulae align fully with those used for the CPI.

The other primary issue raised in the consultation was the question of the most appropriate data source for private housing rental prices.

Although the overwhelming majority of replies received to the consultation opted for no change (332 out of 352 expressing a choice), in January 2013 the then National Statistician concluded that “the formula used to produce the RPI does not meet international standards and recommended that a new index be published”.

However, the National Statistician also noted that “there is significant value to users in maintaining the continuity of the existing RPI’s long time series without major change, so that it may continue to be used for long-term indexation and for index-linked gilts and bonds in accordance with user expectations”.

So, although the arithmetic formulation would not be chosen were ONS constructing a new price index, the National Statistician recommended that the formulae used at the elementary aggregate level in the RPI should remain unchanged. Nevertheless, the RPI lost its status as a National Statistic because it did not meet international standards. Thus began the long road to the recent consultation and the decision to replace the RPI with CPIH in 2030, although the intention is to continue with the official title of the Retail Prices Index.

It should be noted that the 2007 act makes no reference to any requirement to meet International Standards, although it may be argued that in doing so the Authority is fulfilling its obligations under the act in respect of Section 7 to:

(3) Promote and safeguard—

(a) the quality of official statistics,

(b) good practice in relation to official statistics, and

(c) the comprehensiveness of official statistics.

(4) In this Part references to the quality of any official statistics includes—

(a) their impartiality, accuracy and relevance, and

(b) their coherence with other official statistics.

Certainly, as shown below, those clauses from the 2007 Act are given as the primary justification for the decision. Meanwhile, it has proved difficult to identify the precise source for the comparative international statistic or method that was referred to in 2012. Subsequently, in March 2018, the ONS published “Shortcomings of the Retail Prices Index as a measure of inflation”, which referred to the Practical Guide to Producing Consumer Price Indices, as originally published by the United Nations in 2010. This latter publication states “Although targeted at compilers of CPIs in developing countries, it will also be of practical use to compilers of CPIs in other countries.”

Moreover, the current National Statistician, Sir Ian Diamond attended the meeting of the APCP-T held on 9th October 2020 and confirmed that international work should be utilised where possible and posed to the Panel the question of whether there was a consensus on the best index number method to use. Panel members said that there was not a consensus internationally (see item 3.4 of minutes of 9/10/20).

The Campaign for Better Statistics considers that these findings call into question the whole basis for the changes that the Authority insist upon imposing on those who rely upon the existing version of the RPI, for whatever reason.

3.2. How the change to the RPI will be made: Question 1 of the consultation asked “Do you agree that (the Authority’s) proposed approach is statistically rigorous?” Of the total of 831 responses to the consultation, just 105 agreed that it was rigorous whilst 52 believed it was not rigorous, with the remainder either not responding to that question (523) or not taking a decisive stance.

In fact, by far the majority of responses to the consultation believed that it was too narrow in scope; even a significant proportion of those agreeing the proposed method to be rigorous considered that the question should have been whether the change should be made at all. It must be recognised that, in bringing the consultation the UKSA was responding to some stinging criticism from PACAC in July 2019, when they commented: “PACAC concludes that, through its continued mishandling of RPI, UKSA has allowed what was originally a simple mistake in the collection of price inflation data to snowball into a major unresolved issue lasting for a decade.”

Recalling that a primary statutory objective of the UKSA is to “inform the public about social and economic matters” it is noteworthy that throughout the period since the RPI lost its National Statistic status to the present day there has been no real effort to educate the public on this important issue. Even now, after 7 years, the overwhelming majority of those responding to the consultation express confusion as to the reasons for the decisions of the National Statistician. Equally worrying, throughout the period, the UKSA has failed to make any assessment of the actual effects of the change. Unsurprisingly, those that believe that the CPIH understates inflation and whose pensions are linked to RPI have expressed serious concern and who can blame them?

In reading some of the literature on this subject, one can see logic to the change of formula used for the calculation but, beyond that, there does not appear to be any clear evidence to support the case for CPIH. Instead there are concerns that reliance on a primarily macro-economic measure may not provide a proper reference to the cost of living as experienced by the public. It does, however, save the ONS future work and, possibly, it also provides them with the hope that something akin to CPIH may be adopted by other countries!

3.3. The Authority’s decision on how the change to the RPI will be made. In the light of the above comments we think it appropriate to quote the explanation provided in the Response:

The Authority’s decision-making process on how the methods and data sources of CPIH should be brought to the RPI must be based on statistical considerations. Section 7 of the Act sets out the Authority’s statutory objective of ‘promoting and safeguarding the production and publication of official statistics’. In particular, this relates to ‘the quality of official statistics, good practice in relation to official statistics, and the comprehensiveness of official statistics.’ Quality here directly refers to ‘their impartiality, accuracy and relevance, and their coherence with other official statistics.’

There is no reference to the public good in this statement, nor does there appear to be any recognition that the RPI is an economic statistic and not a purely mathematical one. As many observers have previously commented ‘economics is a social science’, although others have agreed with Thomas Carlyle’s observation that it is the ‘dismal science’! Either way it is evident that the purely statistical argument is a matter for debate – no less than the Royal Statistical Society profoundly disagrees with the decision:

“The government’s plan to replace RPI with CPIH is a clear case of using the wrong tool for the job,” said RSS chief executive Stian Westlake. “CPIH is a totally different measure which produces totally different results and it may have negative consequences for consumers, workers and savers.”

3.4. Guidance for users of the RPI: The Response states that “The Authority is keen to support users through the transition to CPIH methods and data sources” and further explains the decision to replace the existing supplementary and lower level RPI indices with those available with the CPIH, because the latter conform to COICOP, an internationally recognised Classification of Individual[3] Consumption by Purpose.

The concern that we in the Campaign for Better Statistics have is that the Authority have very little knowledge as to what their commitment to support users through the transition might entail. The consultation had sought responses to each of four key questions. They were:

- What might be the impact in areas or contracts where the RPI is used?

- Are there any other issues of which the Authority or the Chancellor ought to be aware?

- Which lower level or subsidiary indices are currently used, and what are they used for?

- What guidance would users of lower level or subsidiary indices find most useful for the ONS to provide?

The consultation does not provide a reliable guide to the definitive answers to these questions. Moreover the sheer complexity of the potential requirement is fully illustrated by Appendix C of the Response – “Guidance for the users of RPI sub-indices”. This appendix requires more than one third of the whole Response document to explain the various formulae and adjustments and is virtually incomprehensible to all but the most diligent enquirer. The failure of the Authority to engage a wider public in the process suggests to the Campaign for Better Statistics that the UKSA should provide a more coherent explanation for their proposals and that there should be some appeal process to re-evaluate the decision.

—————————————————-

– By Tony Dent, Campaign for Better Statistics (CBS)

Appendix: Correspondence with ONS concerning Difference Between the RPI and the CPIH

From: Andy King, on behalf of CPI Customer Service

Sent: 02 December 2020 18:11

To: Tony Dent

Subject: Enquiry Response – fao James Tucker

Prices Correspondence Reference – Enquiry Number 13481

The following is a response from Prices to your Enquiry:

Prices Correspondence Response:

Dear Mr Dent,

Thank you for your email. I hope you (and your family) are safe and well in the current situation.

James Tucker has moved to a new role in ONS but I am happy to answer your questions. In response:

a) Is the basket of goods the same for the CPIH as for the RPI? If not, how do they differ?

a) The vast majority of the basket of goods and service for the CPIH and RPI overlap however there are some differences, in particular around housing. Both CPIH and RPI include council tax but their approach to other housing costs differ. The CPIH uses a rental equivalence approach (where the rentable value of properties are used) to measure the cost to owner occupiers’. In contrast, the RPI has mortgage interest payments and house depreciation (both of which are dependent on the latest House Price Index) as its measurement of housing costs.

There are other methodology differences but they are more applicable to your second question.

b) Is the actual measurement process used for each item in the basket the same in each case? If not, how do they differ?

In terms of the collection of price data, the vast majority of the collected prices features in both CPIH and RPI. At quote price level, there are some differences which include:

- CPIH includes high-income private households, residents of institutional households and foreign visitors which are excluded from the RPI; and

- taking a monthly average price for petrol and diesel in CPIH while the RPI measures that price at a particular time in the month (i.e. Index day – the second or third Tuesday of the month).

The aggregation methods for CPIH and RPI differ along with the weighting structure which also contribute to difference between the series. A fuller description of the differences between the two measures are outlined in Section 11.2 Index coverage and classification and Appendix 2 of the Consumer Prices Indices Technical Manual, 2019

I have included a link to the Office for Statistics Regulation Assessment Report on the RPI which outlines the decision to remove the RPI’s National Statistics status:

https://osr.statisticsauthority.gov.uk/publication/the-retail-prices-index/

I’ve not looked at the long term historic CPIH, CPI and RPI before but the below graphs are quite interesting. In terms of the long-run series (back to 1989), there are some unusual movements in the RPI between 1994 and 2008, where the three series deviate as the RPI 12-month inflation increases at a far higher rate than CPIH/CPI but then converging again.

In terms of the last 15 years, the volatile movements in RPI are presumably driven by the affect of house prices on Mortgage interest payments and House depreciation items. Certainly, the period of the economic downturn between 2008 and 2010 when house prices fell was the most significant historic period when RPI underestimated inflation.

I hope that I have been able to answer your questions but please do not hesitate to contact me should you have any further questions. Kind regards,

Andy

Andrew King FIMA | Statistician, Head of CPI Production and User Engagement, Prices Division, National Accounts & Economic Statistics

Office for National Statistics | Swyddfa Ystadegau Gwladol

+44 (0)1633 456508 | [email protected] | www.ons.gov.uk| @ONS

Your Enquiry:

Dear Mr.Tucker

I am looking at the publication “Shortcomings of the Retail Prices Index as a measure of Inflation” and I’d be grateful if you would clarify some points for me as follows:

a) Is the basket of goods the same for the CPIH as for the RPI? If not, how do they differ?

b) Is the actual measurement process used for each item in the basket the same in each case? If not, how do they differ?

I am of course, familiar with the differences as created by the use of different formulae for each measure.

I am also having some difficulties in tracing the precise reasons for no longer supporting the RPI as a National Statistic in 2013. Can you point me to the details underlying that decision.

Finally, the first paragraph of the recent Response to the Consultation on the Reform to Retail Prices Index (RPI) Methodology states that “it (The RPI) has at times greatly overestimated, and at other times underestimated, the rate of inflation.”

Can you provide any examples of both of these observations and what definition of inflation was used as a comparison?

Yours sincerely,

Tony Dent

Director, Sample Answers & Chairman, CMR Group

[1] The different measures of inflation are explained in Chapter 10 of the CPI Technical Manual as published in 2012 – http://www.ons.gov.uk/ons/guide-method/user-guidance/prices/cpi-and-rpi/consumer-price-indicestechnical-manual.pdf

[2] Please see Appendix A for further historical details on the comparison of the RPI with the CPIH.

[3] Despite the use of the term Individual, the classification does refer to household consumption